The AI Privacy Scare: New Data Shows Americans Worry AI Products Will Abuse Their Data

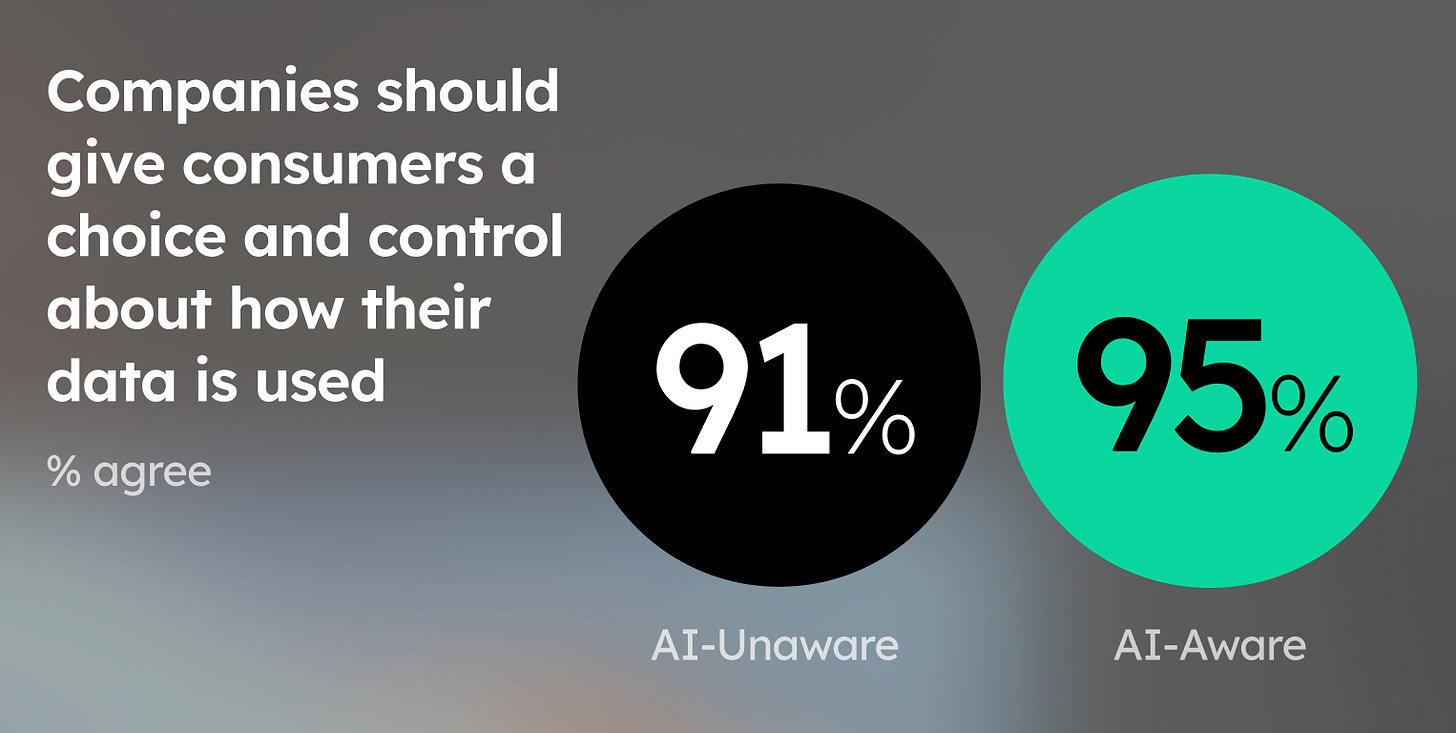

AI products are just now gaining mainstream success, but the American public is already worried about a lack of privacy protections and looking for companies to take their concerns seriously.

Results from our new national poll show that Americans care deeply about privacy in the Age of AI. Consumers want to know how their data is being used and will reward the companies that prioritize user privacy.

Welcome to all our new readers! Our reader count has recently grown, and we’re grateful to everyone who has joined us. After last week’s deep dive into our polling results, we’re now looking at how those results relate to one of our five core principles: privacy.

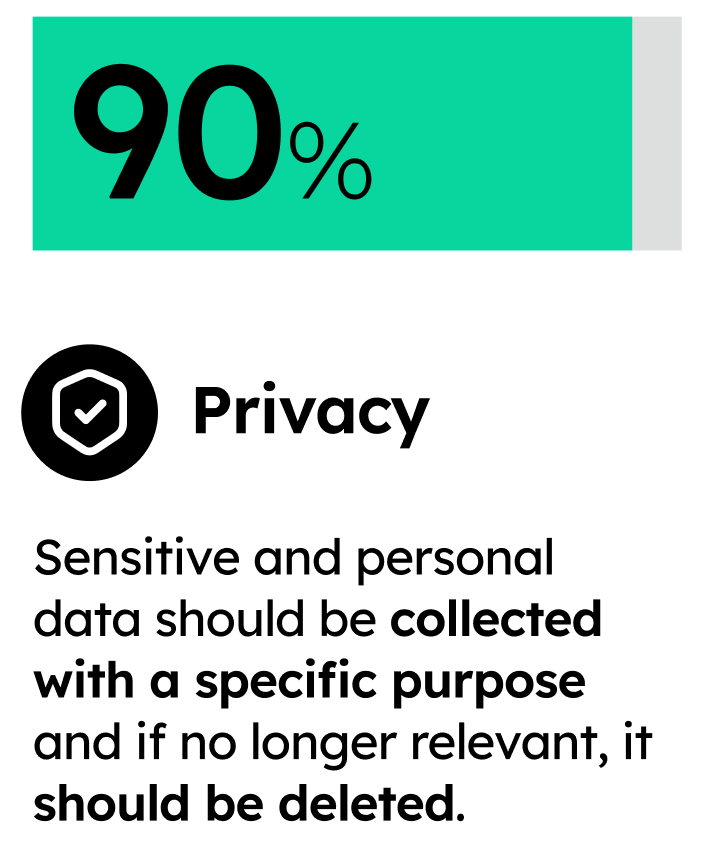

Privacy: sensitive and personal data must be collected conscientiously – and used and protected with care. If personal data is no longer relevant to the purpose for which it was collected, it should be erased.

We’ve previously had posts dedicated to privacy, but now we have fresh, unique data demonstrating how seriously consumers care about privacy in regard to AI. Here’s what we found.

Americans Are Worried About Their Personal Data

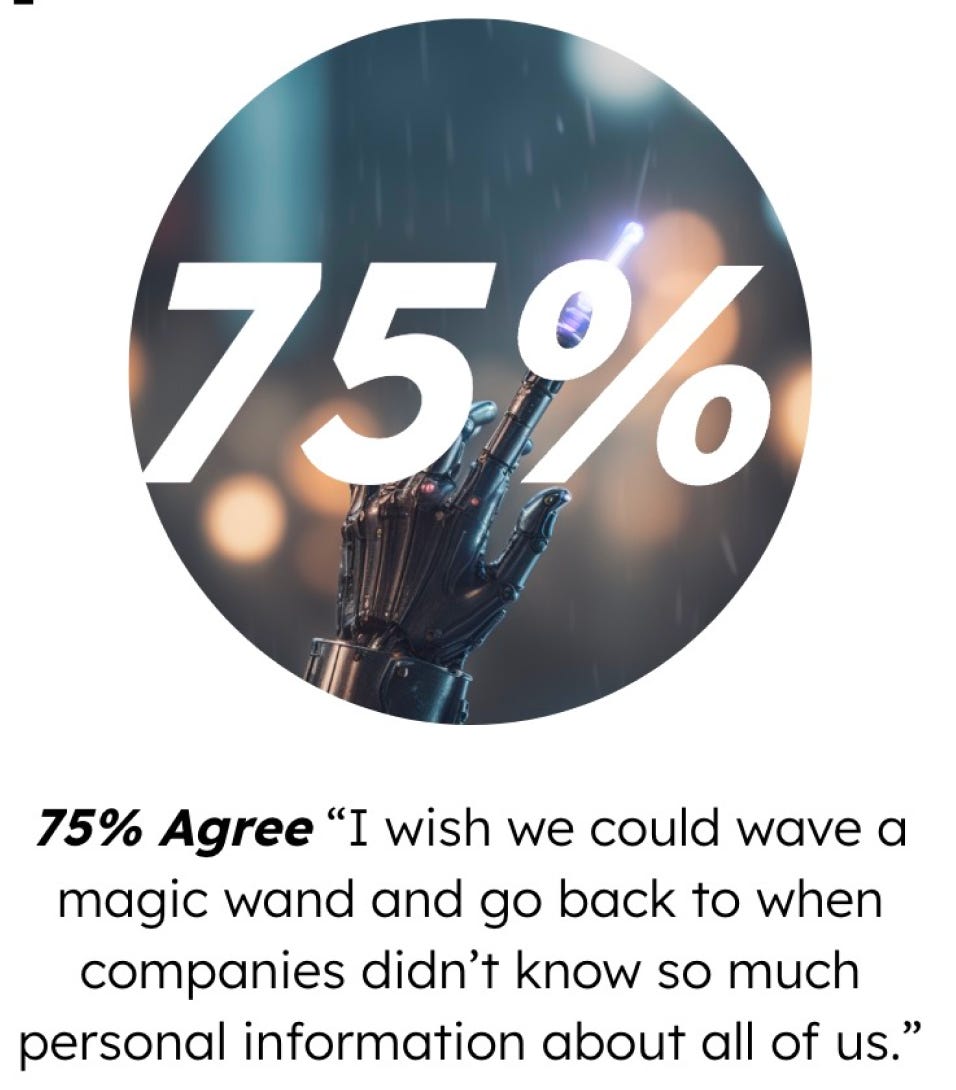

The overwhelming majority of respondents were clearly worried about how AI products will use their data. Results showed that 80% of people were concerned about AI products having access to their personal data, and 75% were worried about products being built with their personal data. No matter how you phrase the question, consumers don’t like the sound of AI leveraging personal data.

The last 10 to 20 years of constant abuse of personal data and people’s digital lives have taken a toll. When we asked whether people agreed that “sensitive and personal data should be collected with a specific purpose and if personal data is no longer relevant to the purpose for which it was collected, it should be deleted,” over 90% said yes. Simply put, people want caution and care when a business collects their data.

People Don’t Believe Silicon Valley’s Usual Arguments

Stop me if you’ve heard this before. The constant refrain when it comes to personal data collection is that “personalized ads and products are better for consumers.” Data concerns are dismissed. Some even go so far as to say that consumers’ stated preferences simply don’t align with their revealed preferences (that is, consumers say they don’t like data collection and yet willingly use digital products that collect their data anyway).

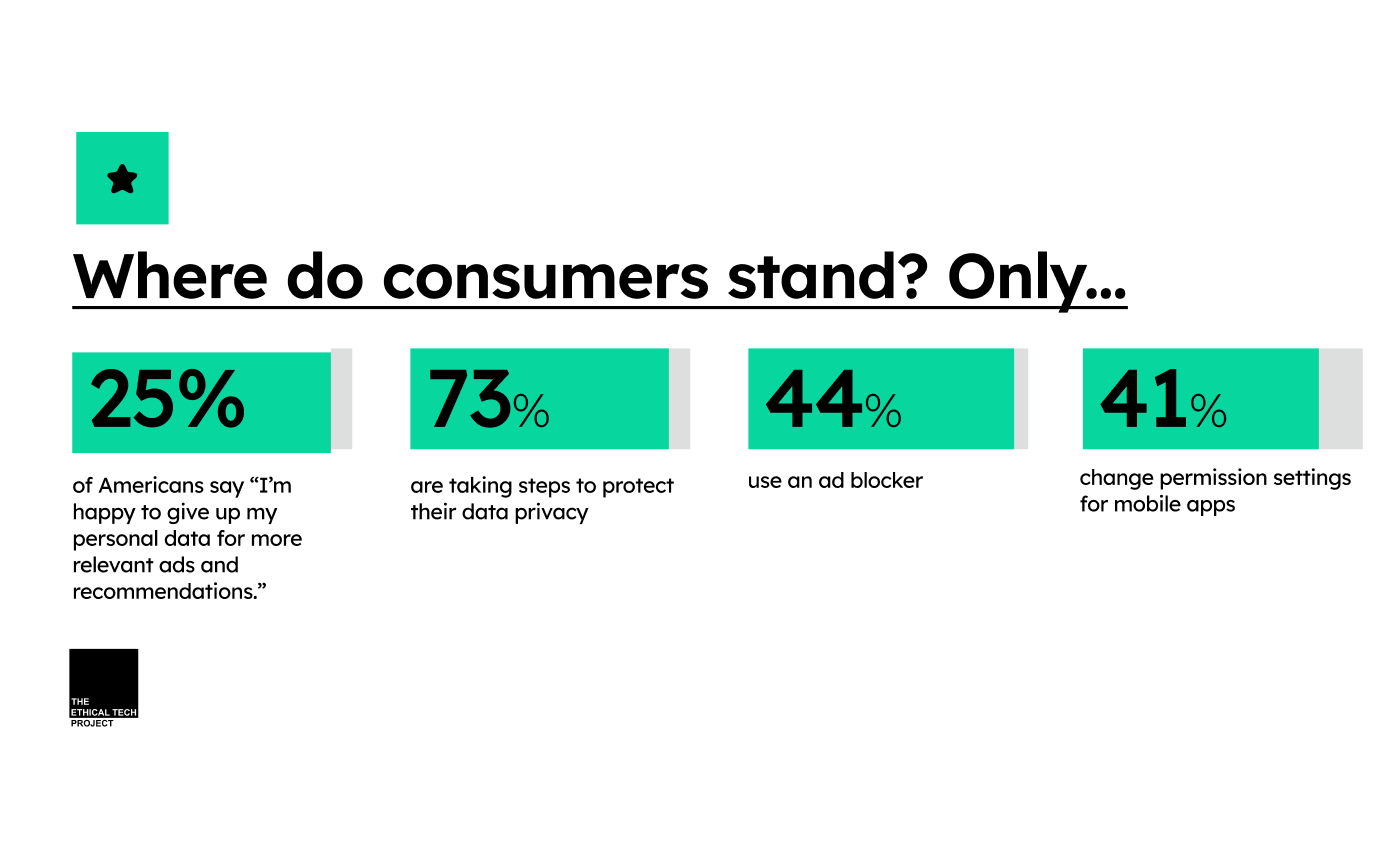

We’ll do a deep dive in a few weeks on our conjoint analysis, which gets to the heart of the “revealed preference” argument. But the fact of the matter is that people aren’t happy with the tradeoffs made by many tech companies, and there’s no way to spin this as a positive. Only 25% of respondents agreed that “I’m happy to give up my personal data for more relevant ads and recommendations.” Consumers aren’t just unhappy. They’re taking action, which suggests their revealed preferences do align with what they say. Among Americans, 73% are taking steps to protect their data privacy, 44% use an ad blocker, and 41% change permission settings for mobile apps.

The Big Takeaway: Consumers are demanding companies that prioritize privacy, defined as conscientious, purpose-based data collection and retention

The failures of past tech companies create an opportunity for today’s entrepreneurs and business leaders: there’s clear market demand for technology that treats user data with dignity. Building with privacy in mind is a market differentiator in a world of companies that abuse data for their own purposes. As the current wave of AI products prepares to hit the mass market, the ones that prioritize privacy may be the ones with an edge.

Tell us your thoughts! Do those polling numbers align with your own privacy views? What statistics surprised you the most?

What We’re Reading On Ethical (and Non-Ethical) Tech This Week:

Considering the profitability of the adtech industry, it's clear that these companies are not feeling the pinch just yet. While consumer sentiment toward data privacy is changing, it's crucial to acknowledge that change in such a massive and entrenched industry often happens at a glacial pace. The fact that 73% of Americans are taking active steps to protect their data privacy is a strong signal of discontent. However, the key question is whether these actions will be enough to force the adtech industry to change.