What Do Americans Think of AI? Data from Our National Survey

Our new national poll of U.S. consumers shows that people are still making up their minds about AI, which gives companies an opportunity to demonstrate value and mitigate risks.

This week’s post is the first of several deep dives into our poll results, analyzing how U.S. consumers are thinking about AI. This survey is the most up-to-date dataset exploring the intersection of AI and data privacy in the minds of Americans, and longer posts like these are a chance to step back and consider public opinion and its implications for companies building AI applications.

This Week’s Big Question: What's the Current American Consensus on AI?

It’s tempting to assume that everyone is thinking about AI as much as we are (and as much as you, our well-informed readers, are!). Of course, the conversations in our own bubbles don’t always reflect the rest of the country. As we’ll show in the following sections, people are still getting familiar with AI technology and are cautiously optimistic (though many are undecided) about how it will be used. Despite this uncertainty, one thing is clear: American consumers want their personal data protected and under their control, even when they willingly share it with AI applications.

Some quick details: our nationally representative poll included 2,500 respondents, with representation across age, gender, race, and income. Alongside a set of standard questions, it used a method called conjoint analysis, which shows which privacy features contribute most to consumers’ willingness to buy. We’ll go through those results in a later post. We conducted the polling in partnership with Slingshot Strategies, a national polling firm.

People’s impressions of AI are still being shaped, but their values remain the same. Consumers care about privacy and ethical data use, even while anticipating the exciting developments technology can bring. Business leaders shouldn’t hold back from deploying AI in their businesses, but our research shows they should be careful about the tools and controls they offer the consumers whose personal data their AI relies on.

Hello AI, It’s Nice to Meet You

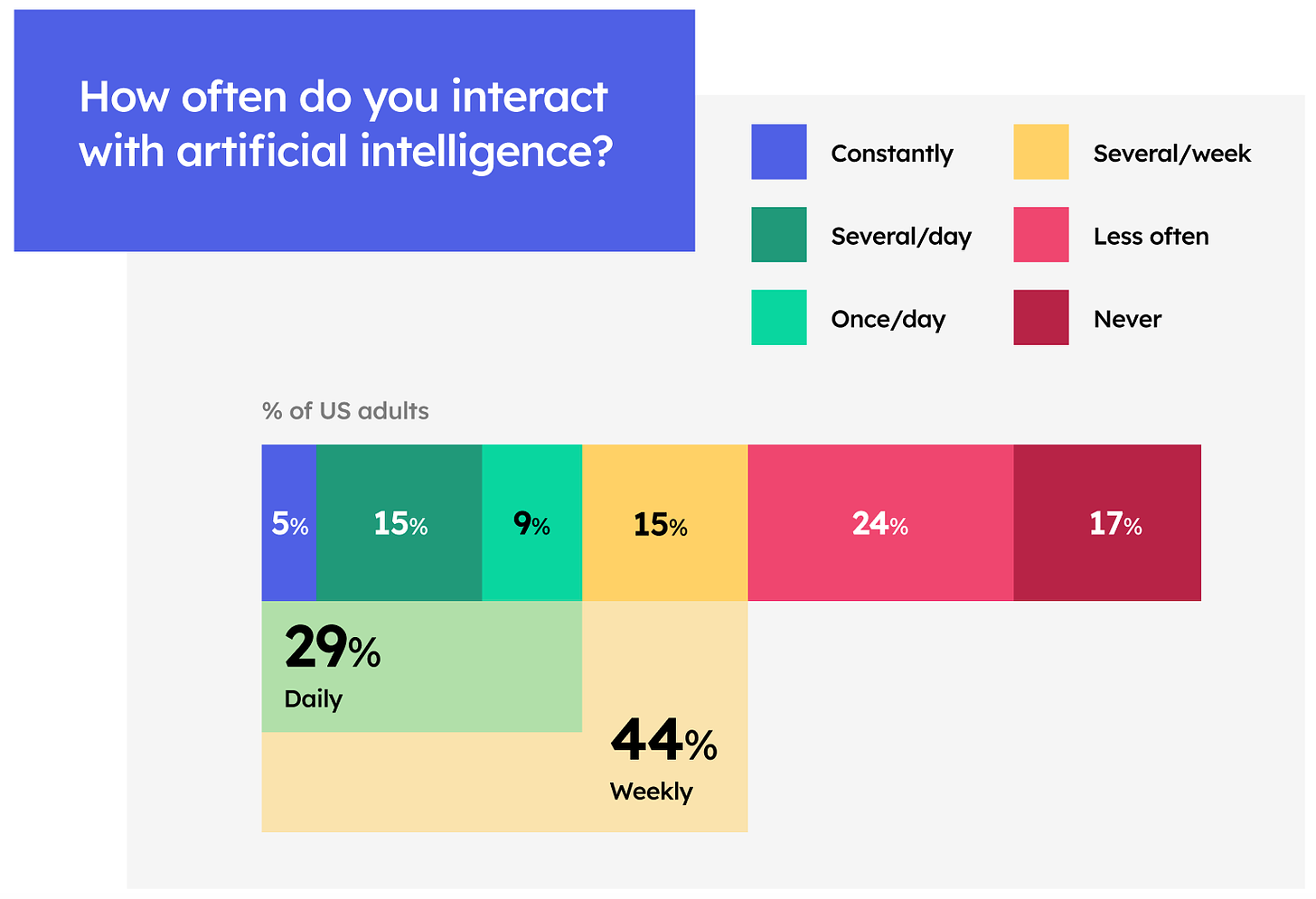

While the vast majority of consumers have encountered AI in some capacity, we’re still early in the adoption phase as people figure out how AI benefits their daily lives. Check out the question above.

First off, notice how many people have interacted with AI in some form: only 17% say they have never interacted with it. It’s remarkable that so many people have interacted with the technology so early in its development. The catch, however, is that what people mean by “AI” varies.

Our poll also asked whether respondents had ever used ChatGPT, the poster child of the current large language model (LLM) wave, and only 27% said they had. So while most people have encountered some form of AI, most haven’t used the newest tools. It turns out that while ChatGPT has been widely reported as the fastest-growing consumer app ever, it still has many more Americans to reach, and it continues to make quick strides. Pew’s national survey in July found that only 19% of Americans had used the tool. We took our poll in early September, which suggests that roughly 8% of Americans (over 26 million!) became first-time users in the eight weeks between the two polls. That’s nearly 500,000 new users a day.
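The new-user estimate is simple arithmetic, and a few lines of Python make each step explicit. Treat this as a rough sketch rather than our methodology: it assumes a U.S. population of about 330 million (roughly the base that the “over 26 million” figure implies), treats Pew’s July result and our September poll as directly comparable even though they are separate surveys, and counts eight weeks as 56 days.

```python
# Rough sketch of the ChatGPT new-user arithmetic.
# ASSUMPTION: a U.S. population of ~330 million; this base is not a survey figure.
US_POPULATION = 330_000_000
PEW_JULY_SHARE = 0.19   # Pew, July: share of Americans who had used ChatGPT
OUR_SEPT_SHARE = 0.27   # our poll, early September
DAYS_BETWEEN = 8 * 7    # "eight weeks between the two polls"


def new_user_estimate(population, share_before, share_after, days):
    """Return (share gained, implied new users, implied new users per day).

    Assumes both polls measure the same population the same way,
    so the difference in shares can be read as first-time users.
    """
    share_gained = share_after - share_before
    new_users = share_gained * population
    return share_gained, new_users, new_users / days


share, users, per_day = new_user_estimate(
    US_POPULATION, PEW_JULY_SHARE, OUR_SEPT_SHARE, DAYS_BETWEEN
)
print(f"{share:.0%} of Americans, about {users / 1e6:.1f} million people, "
      f"roughly {per_day:,.0f} new users per day")
```

Dividing by 56 days gives roughly 470,000 new users per day, in line with the “nearly 500,000” figure. A smaller base, such as counting adults only, would lower both the total and the daily rate proportionally.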

Look again at the chart above, and notice that while most Americans have encountered AI in some way, a much smaller share are frequent users: just 29% use the technology at least once a day. Most people are still figuring out whether and how AI products fit into their daily lives, which should get easier as the coming wave of AI applications offers valuable new use cases.

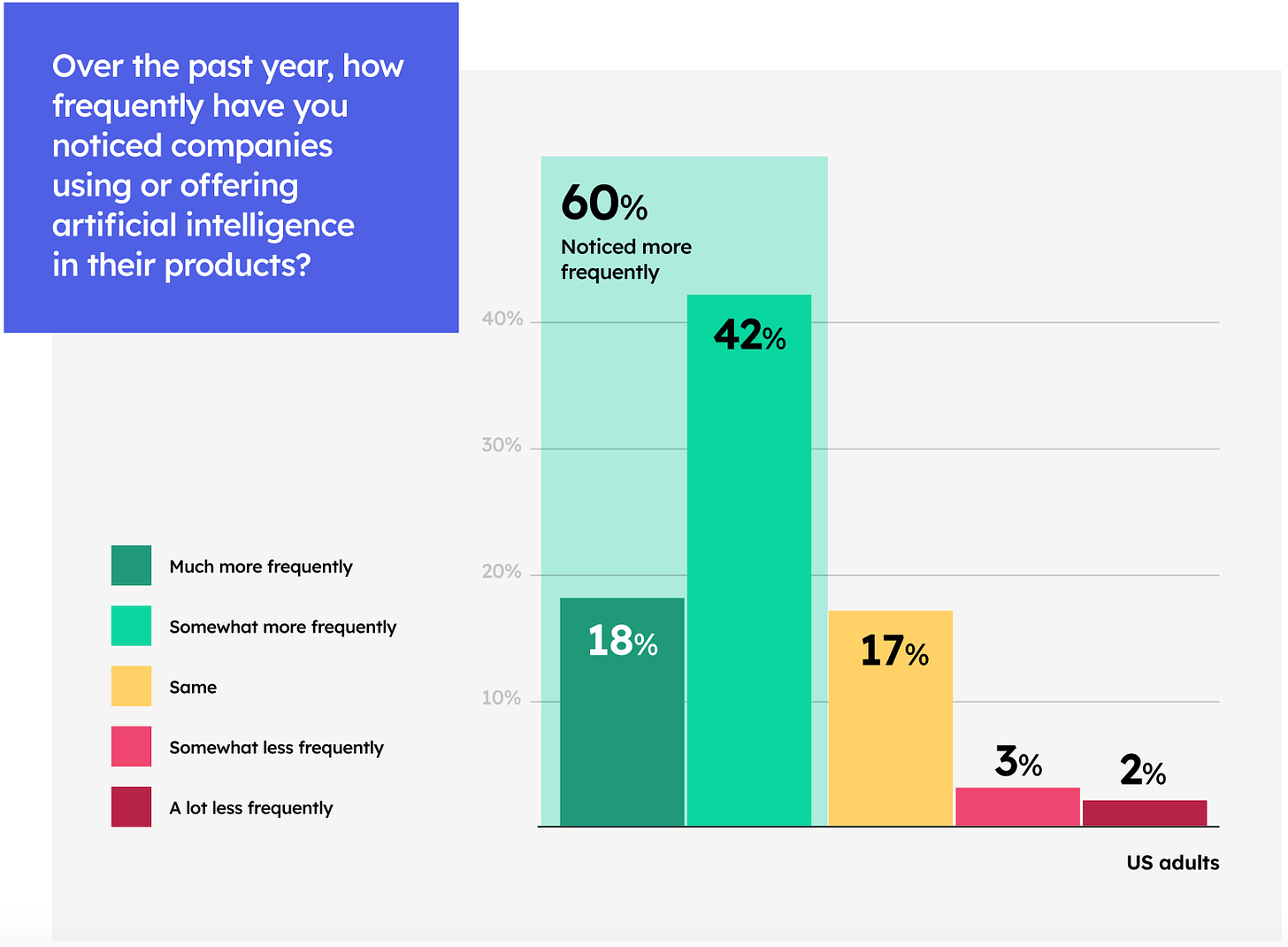

People Realize the AI Wave is Coming

Okay, so maybe not everyone is asking ChatGPT to reply to their texts, but people are still aware of how much excitement there is around AI. As shown above, 60% of Americans have noticed an increase in companies integrating AI into their products. For those of you in Silicon Valley, that number might feel low when every product launch centers on AI. But the vast majority of the country isn’t being pitched a new ChatGPT use case at parties. Remember, less than 8% of the labor force works in tech.

So even though most people don’t work in tech, the majority of Americans are seeing the hype around AI. They know new products are coming and have seen companies offering new services. The more interesting question is how people feel about the coming wave. As the next question shows, people feel favorably about AI overall, but millions of Americans are undecided.

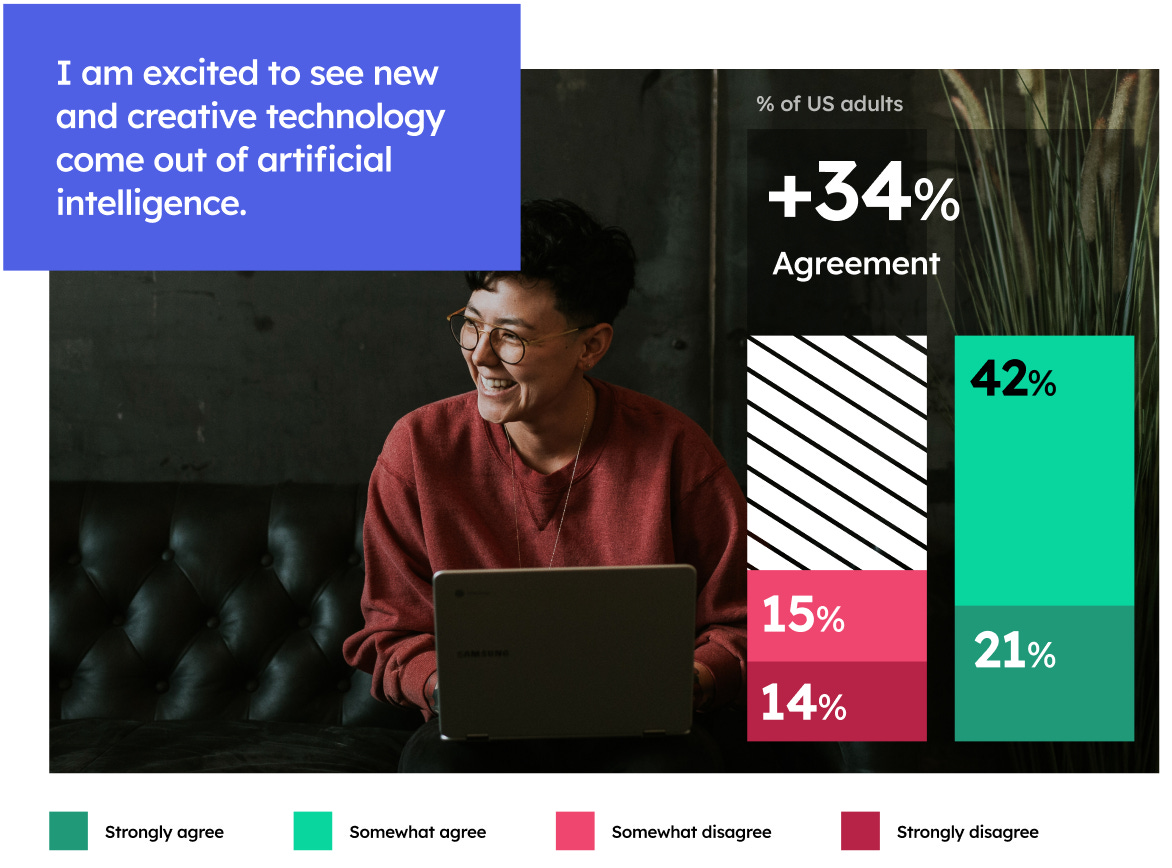

Americans Are Cautiously Optimistic About AI

Awareness of AI is one thing, but it’s important to also have a sense of the sentiment behind that awareness. Are people scared out of their minds? Thrilled? Apathetic?

Most people are excited. As shown above, nearly two-thirds of Americans are excited to see the technology that comes from AI, and only 29% aren’t. This is promising for companies and builders: the public is curious and wants to see what AI can achieve. However, that doesn’t mean people are free of concerns or blind to the risks.

We also asked whether respondents thought AI would benefit or harm consumers. The results were still positive but more split: 39% expected benefits, 25% expected harm, and 34% were neutral or “not sure.” We take this to mean that people haven’t made up their minds yet, which makes sense given how early we are in AI adoption. People are eager to see where AI leads but aren’t sure whether the destination will be a positive one.

This sentiment makes sense in light of earlier waves of technology. Social media, for example, generated plenty of excitement, and many people were eager to see where it would lead, but many now question its benefits. Between troubling youth mental health trends, misinformation, data privacy scandals, and the collective feeling of being addicted to our phones, even people who love social media recognize its limits.

It’s possible that as people wait to see what AI brings, their initial opinions are shaped by how they view other technology. Until they see clear positive or negative use cases, people may assume that AI will be exciting but come with negative side effects. It’s also worth noting that Americans may be more negative than their global peers: Pew research in 2022 found that American adults were more negative about the impact of social media and the internet on society than adults in other countries.

This framing is important for companies to consider. Even as software engineers and technologists are wildly excited about AI’s potential, most of the American public is still making up its mind and isn’t convinced that previous eras of technological development were positive. Tools that build people’s trust will help win more supporters, while a single major AI scandal could be enough to sour the public’s outlook on our AI future. Companies should prioritize ethics to win the support of a cautiously optimistic public.

Which AI Are You Talking About?

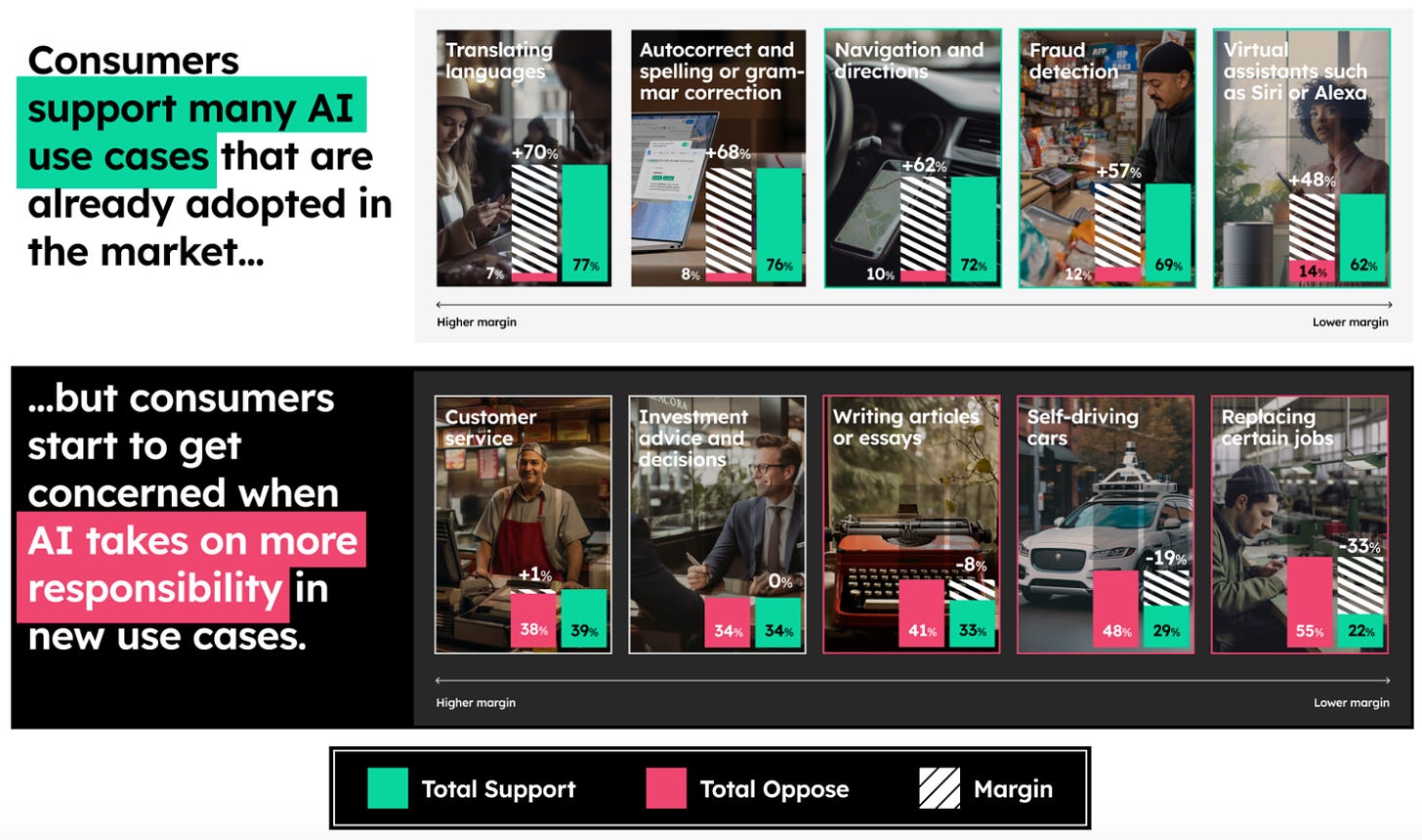

Another sign that we’re early in the AI revolution is that people still haven’t seen AI broadly applied across the economy. With only 27% of people ever having used ChatGPT, most people are still relying on their own conceptions of AI. What’s interesting is that, as shown in the chart above, people are largely comfortable with tangible AI tasks once they’ve been exposed to them.

Some of the most tangible examples of AI, such as translation services, autocorrect, fraud detection, navigation directions, and virtual assistants, are viewed overwhelmingly positively. In all those instances AI isn’t a scary, looming figure; it’s a tangible solution to pain points in people’s daily lives. The more AI services companies offer that solve real problems, the more positive examples people will have to draw on when asked what they think of AI.

That doesn’t mean every tangible example is positive. Self-driving cars drew surprisingly negative sentiment, but most people have only encountered them through the media rather than in person. Autonomous vehicle (AV) pilots have only recently begun to ramp up in urban centers, so unless people live in one of those areas, they are likely hearing about self-driving technology only secondhand.

Another explanation could be that people are hesitant to let machines take over tasks humans have always performed, and driving has historically been one of them. The strongest negative polling came when people were asked whether they were comfortable with AI replacing certain jobs. People are nervous about machines making decisions that have previously been the exclusive domain of humans.

This phenomenon isn’t new. We’re living in an era of remarkable technological change, and people feel most comfortable with what they know. Shifting certain tasks from human to machine control may therefore require transitional compromises to put people at ease. Consider the proposal for early cars to have replica horse heads on the front so as not to scare horses in the streets. Future generations may find it odd that our current self-driving cars still have steering wheels, even though they aren’t strictly necessary. But in an era of transition, technologists need to take extra measures to help people get comfortable with change.

People Want Choice and Control Over Their Data

While many of our questions didn’t lead to consensus, people were near-unanimous in their desire for data privacy and consumer choice. People want AI to be used in a way that honors the dignity of their data and gives them control over how their data is used.

This conclusion is the most important: people may not know yet how they feel about AI, but they do know how they feel about privacy. Consumers are tired of companies breaking their privacy promises. People want to know that companies take their demands seriously and are committed to building ethical technology that puts people first. Below are a few questions that illustrate people’s overwhelming desire for privacy.

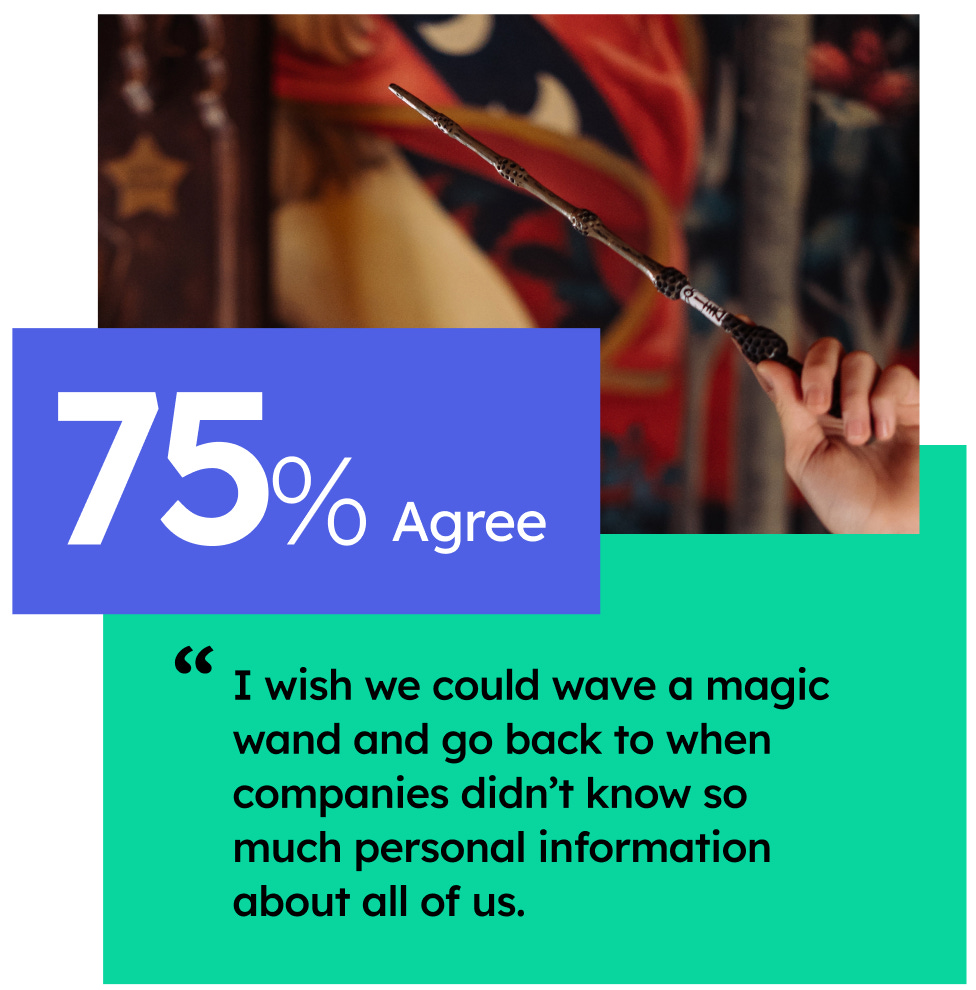

This first question aligns with the fact that people have mixed views of technology. Despite all our recent technological advancements, people wish they lived in an era of greater privacy. Our age of cell phones, social media and targeted ads has given people a mixed bundle of goods that makes them feel conflicted. While nearly every American benefits from the convenience and information access of the digital age, they wish it didn’t come at the cost of personal privacy.

Even more people agree that data privacy needs to be protected going forward. Companies can still build tech systems that treat people’s data with respect. The tools we use today likely won’t be the ones we use 20 years from now, and business leaders can usher in an age of ethical tech if they commit to privacy by design.

This final question shows that not only is privacy ethical, it will also be good business. When 90% of people want a feature, the companies that build it are the ones that win. Winning companies in the Age of AI will be the ones that prioritize people’s needs, transparently show how data is being used, and uphold their commitments. In other words, it will be the companies that uphold the principles of privacy, agency, transparency, fairness and accountability.

Over the next 5 weeks we’ll go through each of those principles and show how the polling data demonstrates consumer interest in each of those values. We’ll also take several more deep dives into our polls to understand how people view AI when it comes to their own personal data and the extent to which consumers will reward privacy features.

Tell us your thoughts! What do you make of our first deep dive into the data? What are you most curious about regarding public opinion of AI?

What We’re Reading On Ethical (and Non-Ethical) Tech This Week:

How a billionaire-backed network of AI advisers took over Washington - Politico

US to Tighten Rules Aimed at Keeping Advanced Chips Out of China - Bloomberg

The Upshot of Microsoft’s Activision Deal: Big Tech Can Get Even Bigger - New York Times

Gary Gensler urges regulators to tame AI risks to financial stability - Financial Times